Presentation to Irish Philosophical Association Annual Conference, University College, Dublin, Nov. 2018

I will examine ways in which human autonomy can be threatened by emerging technologies.

Autonomy is a fundamental value in modern ethical systems, serving as the basis for derivative values such as freedom and dignity (Schneewind 2007; Dainow 2017). Considering the impact on autonomy is therefore a common starting point for the ethical assessment of a new technology. However, it is less well recognised that there are multiple competing accounts of what autonomy is (Dworkin 1988; Anderson 2014; Dainow 2017). I will explore the ways in which three sample emerging technologies (personalisation, bioelectronics, and robotics) interact with four accounts of autonomy. It is not my intention to defend any particular account of what constitutes autonomy. Instead, by exploring the interaction between different accounts and different technologies, I hope to enhance our understanding of both autonomy and the ethical assessment of new technologies.

Modern treatments of autonomy derive from Kant’s original conception. Kant was the first to apply the concept of autonomy to human beings (Schneewind 2007). Before Kant, the term was a political one. A state was said to be “autonomous” if its laws were drafted within that state, as opposed to being drafted by a distant imperial court. Kant applied the term to the individual in Groundwork of the Metaphysics of Morals (Kant 1998). His aim was to justify the right of each individual to make their own judgements regarding morality, in contrast to the then politically dominant understanding of ethics, in which ordinary people were seen as too weak-willed to act morally without threats of punishment and promises of reward. Kant set out to demonstrate that each person drafted their own rules for their own conduct, that these rules constituted each person’s moral code, and that no education was required for this ability: it was universally present in all humans. Kant labelled this innate capacity of all humans to determine what was morally correct ‘autonomy’. He then used this capacity for autonomy as the basis for human dignity, which in turn became the basis for human rights.

The Groundwork laid down very strict procedures for thinking, such as the need to avoid considering how one may personally benefit. These are now known as the formulations of the Categorical Imperative. Such procedures are defensible, if debatable, so long as we confine ourselves to moral autonomy. However, modern thought has broadened the concept to include political and personal autonomy, often intertwining it with authenticity along the way. The thinking procedures Kant described for moral autonomy do not make sense for personal or political autonomy, and so a number of different conceptions of autonomy have arisen to fill the gap.

Kant’s definition of autonomy has come to be known as a “substantive procedural” account. A “procedural” account holds that autonomy is a form of thinking which follows a specific procedure. A subset of procedural accounts is substantive, adding the requirement that this thinking procedure consider only certain things. By contrast, “content-neutral” procedural accounts try to define autonomy without reference to any particular concern or aim. Content-neutral accounts form the majority of modern accounts of autonomy (Buss and Zalta 2015).

We do not have time to look into the details of specific accounts of autonomy. The general pattern is that when influential accounts, such as those of Dworkin (Dworkin 2015) and Frankfurt (Frankfurt 1988), expanded autonomy into personal autonomy, some critics felt that Kant’s heritage had led to an unrealistic picture of what it means to be human. In particular, many reacted against the isolationist, or individualistic, view of human valuing presented in these early procedural accounts (Christman 2015).

For example, Dworkin defined autonomy as the capacity to reflect on one’s values and to change them as a result of this examination. His original position was, like Kant’s, hostile to external influence, and it helped spur the rise of relational accounts, which do not see social influences as undermining autonomy. Relational accounts centralise the role of relationships in human life and weave social considerations into the essence of what it is to be human. Under such accounts, autonomy depends on the right sorts of social influence, though the accounts differ over what constitutes permissible social influence (Mackenzie and Stoljar 2000).

Autonomy involves considering things in the light of personally held values. But do you need to have acquired those values autonomously in order for them to generate autonomous decisions? If you did not acquire those values autonomously, can decisions made on the basis of those values be considered autonomous?

Two examples will illustrate alternative approaches to this question. Laura Ekstrom’s approach is to adapt coherentist epistemology, replacing propositions with values (Ekstrom 1993, 2005). Under her account, values and decisions are autonomous if they are coherent with the rest of one’s values. She does not demand that these values have been acquired in any particular manner, merely that they do not lead one to self-contradiction. A popular alternative is John Christman’s relational autonomy, which focuses on the methods by which values are acquired (Christman 2004). His position is that autonomy does require that values be acquired autonomously, and that this in turn requires that the individual had access to alternative values at the time of acquisition.

It has also been argued that autonomy, if conceived of solely as certain forms of thinking, does not account for the full range of human emotional and physical experience, such as the trained muscle action we see in playing sport or music, or healthy reflexes, both of which can also express personal autonomy. Diana Meyers has responded to this concern with an account of autonomy which provides a place for such healthy non-cognitive operations of the human body (Meyers 2005).

On the other hand, Reinhold Niebuhr’s Christian Realist position holds that neither conscious cognition nor healthy reflexes, nor the combination of the two, is sufficient to provide human autonomy (Niebuhr 2004). He holds that either will inevitably lead to some form of incoherence, because human beings lack the capacity to balance their impulses towards materialism and idealism, and must inevitably lose individuality in one while diminishing the other. In his account, only the action of spirit can harmonise the poles of the material and the cognitive, and this is possible only through Christianity; autonomy is therefore achieved only when one lives in accord with Christian faith.

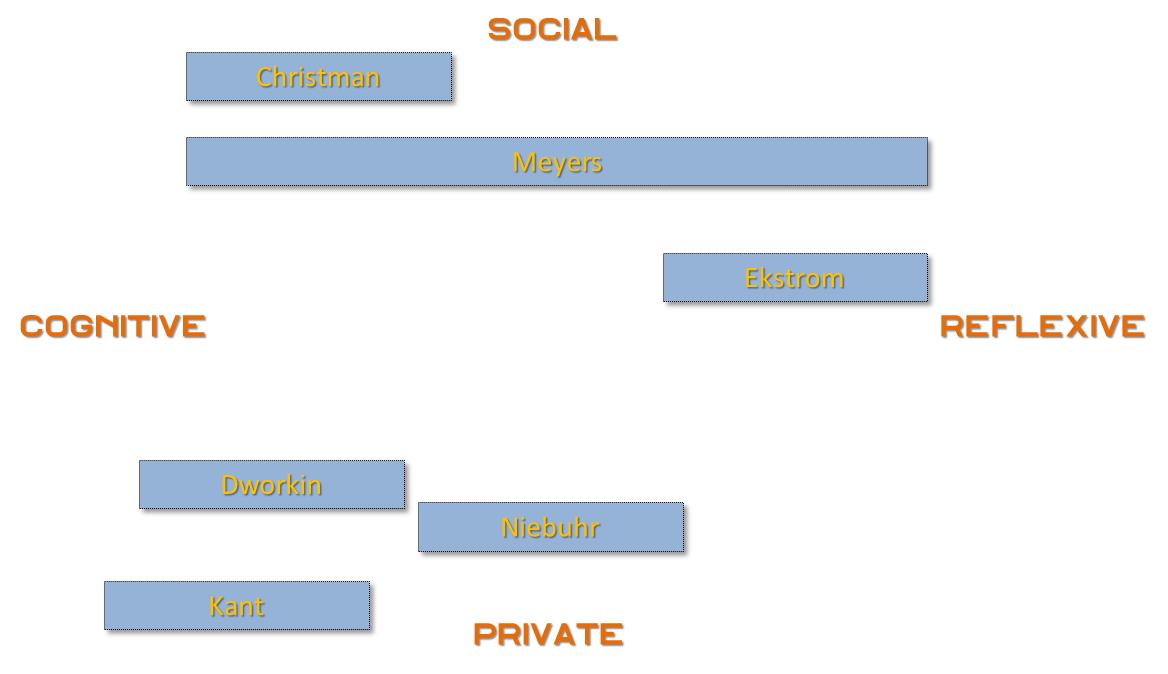

We can arrange the different accounts on two axes: cognitive versus reflexive, and social versus private. Accounts such as those of Kant and Dworkin sit at the extreme of the cognitive/private pole.

Let us now turn to emerging technologies. I want to discuss three technologies: personalisation, bioelectronic implants, and robotics.

Personalisation refers to changes in a technology system to meet a single person’s characteristics. Examples range from news feeds customised to your taste, as we see in Facebook (Stoycheff 2016), and search results tuned to you by Google (Dillahunt, Brooks, and Gulati 2015), through to smart houses which adapt to the inhabitant’s tastes (Al-Hader et al. 2009).

The ethical issue for autonomy concerns whom the personalisation serves and who controls it; in other words, it is an issue of power. Where personalisation is under the user’s control, it represents an extension of their autonomy under any account of autonomy. Where personalisation is outside the user’s control, as is universally the case today, it represents a reduction in autonomy under some, but not all, definitions. Some accounts, especially those at the cognitive/private pole of our axes, such as Dworkin’s, allow for post hoc consent: autonomy is preserved if the person would have agreed to the personalisation had they been given the chance to do so at the time. Automated personalisation also preserves autonomy under Ekstrom’s coherentist account, provided the personalisation is coherent with the person’s values and aims. In both cases autonomy is judged on the outcome, not the process. By contrast, under Christman’s view, and to a certain degree under Niebuhr’s, it is the process which is problematic, irrespective of outcome. Christman’s relational autonomy requires the availability of genuine alternative choices. This requires knowledge of the algorithms underlying the personalisation, as well as the availability of alternatives. From this perspective, any personalisation which is not understood represents a reduction in autonomy, irrespective of outcome. Furthermore, even if the personalisation is understood and all its outcomes are agreed to, it still represents a reduction in autonomy if alternatives are not available.

More pressing today is the personalisation of one’s information environment. For many accounts, sociality is an important part of autonomy. Sociality relies on shared binding elements, such as a common understanding of the world, a common language, and so on. Personalisation of information is now recognised as risking the creation of a “filter bubble”: an information environment whose cognitive content has been so closely tuned to an individual’s characteristics that it no longer accurately represents reality.

Filter bubbles are not a reduction in autonomy under Ekstrom’s coherentist account, because the elements of a filter bubble are coherent with each other. Whether they reduce autonomy under Dworkin’s procedural account depends on whether one endorses the filtering process. Where one does not know that filtering is happening or how it works, as is the case today, one cannot knowingly assent, and autonomy is therefore restricted. A similar concern applies under Christman’s relational autonomy, because he demands both knowledge and an alternative. However, if users were able to understand and configure their filtering, it would represent an extension of personal autonomy under most accounts. For Niebuhr, by contrast, the entire filtering and personalisation process is an exercise in unbalanced idealism, and information selection should always be undertaken by individuals themselves.

Bioelectronic systems interact directly with the human body and are used mainly in health care; examples include pacemakers and smart skin (Ikonen et al. 2010). Bioelectronics directly confronts the essence of what it is to be human, and thus bears directly on accounts of autonomy based on conformance to an authentic human nature, such as Niebuhr’s.

Bioelectronics can change internal bodily states in the same manner as drugs, and so shares many of the same concerns. The relation between autonomy and drugs has been extensively examined in medical ethics, so I will skip those issues in order to focus on issues unique to bioelectronics.

The aspect of autonomy affected by bioelectronics relates not to the outcomes or processes of self-governance we saw with personalisation, but to the nature of the self doing the governing. An important ethical concern in this respect arises from the conditions of use. Under current legislative regimes, implanted devices such as pacemakers either remain the property of the manufacturer or are sold under restricted terms of use. As software devices, they are subject to intellectual property protection, so the manufacturer controls how and when the devices are maintained, and by whom. It took the US government two years to force St Jude Medical to patch faulty software in a quarter of a million pacemakers, during which time the fault was known to be potentially fatal. St Jude (since acquired by Abbott) resisted out of fear of bad publicity (Wendling 2017). The people in whom the pacemakers were implanted had no control over the device and were legally forbidden from having it fixed themselves.

The issue for autonomy is the degree to which such implanted devices constitute part of the self. Some may see them as external objects, no different from a wristwatch. Others may regard them as part of their bodies, and thus part of themselves. These people will therefore find themselves in a position where another party owns, or at least controls the use of, part of their own self. At that point, by definition, autonomy has ceased.

Here we see that the implications for autonomy depend neither on the process nor on the outcome, but on the nature of the self doing the governing.

It also shows that, except under some authenticity-based accounts, this technology is ethically neutral: the concern lies in the way it is integrated into society. When the ethical issues derive from the manner of ownership, it is not the technology’s functionality which has ethical import, but the social factors around it. We saw the same thing with personalisation, where the issue was ownership and control. However, as we shall see with robotics, it can be the technology itself which is the issue.

So, let us now consider robots.

As noted earlier, procedural accounts of autonomy like Dworkin’s have been criticised for portraying people as isolated individuals and leaving no space for social factors. We have seen that a common response has been to develop treatments of autonomy which include space for the influence of others. Central to relational accounts of autonomy is the acceptance that other people play a role in the development of one’s values, and that it is not possible to function without their influence. Such conceptions of autonomy are threatened when robots replace humans in roles which have social externalities, such as health care and education. Even where robots are able to undertake the central tasks, the loss of human contact constitutes a reduction in relational autonomy. The consequence is that there may be some tasks for which robots are not ethically suitable, for no other reason than that they are robots. If the social externalities of a work role are essential for maintaining the recipient’s autonomy, then that role must be reserved for humans. Were we to replace nurses or teachers with robots, we might see people argue that this constitutes a violation of their human rights.

In the previous examples we saw that whether autonomy is judged to be restricted can depend on whether one focuses on the outcome or on the process. We also saw that it can depend on the definition of the self. Robotics shows another pattern. Here it is not the process, the outcome, or the self which is of concern, but the values each individual holds. Where human contact is considered an essential part of accessing a service, autonomy is restricted if the service is delivered by a robot. The issue here is not the central task, but the mechanism by which it is performed. Replacing people with robots in social situations deprives people of a means of human contact. This is acceptable to Christman only if non-robotic alternatives are available. It is acceptable to Ekstrom, and possibly Dworkin, only if social externalities are not important to the individual in question. Niebuhr’s position is more complex and harder to determine. I think his position would be that, since love is the one factor which prevents society from being totally sinful, and since robots cannot love, they are not fit to replace humans in social roles. Thus metaphysical beliefs about the mechanical become central, once again showing that autonomy’s relationship with robotics depends on one’s internal values, not on the account of autonomy one holds.

We have explored four different accounts of autonomy in relation to three emerging technologies. We have seen that whether autonomy is perceived to be restricted can vary according to whether autonomy is seated in process or outcome, and that this can lead to opposing positions. We have also seen that this distinction can be irrelevant, and that restriction can depend instead on how one views the self, or simply on what one’s values are.

The conclusion is that emerging technologies, except in extreme cases, cannot be defined as either autonomy-restricting or autonomy-promoting. The same technology, used in the same way, can be considered both restricting and liberating, according to the account of autonomy each individual holds. The matter is complicated by the fact that different accounts focus on different aspects of the situation. Resolving such debates requires understanding that the ethical assessment of a technology is not a matter of examining the technology or its effects, but of examining its coherence, or otherwise, with the range of views its users may hold.

Yet efforts to preserve autonomy are central to technology ethics. Efforts to reduce the negative ethical impact of technologies on people, through processes such as value-sensitive design, need to be cognisant of the various conceptions of autonomy relevant to the intended functionality of the system. Such approaches generally hold that the solution is to specify a particular ethical theory, such as deontology or utilitarianism, from which to draw values, but the application of those values still depends on the concept of autonomy being used. These approaches usually assume that autonomy has a single, fixed meaning. Efforts such as value-sensitive design stand a better chance of success if they focus less on incorporating a single set of values and more on ways in which users can adapt technologies to reflect their own individual conception of autonomy.

REFERENCES

Al-Hader, Mahmoud, Ahmad Rodzi, Abdul Rashid Sharif, and Noordin Ahmad. 2009. “Smart City Components Architicture.” In 2009 International Conference on Computational Intelligence, Modelling and Simulation, 93–97. IEEE.

Anderson, Joel. 2014. “Regimes of Autonomy.” Ethical Theory and Moral Practice 17 (3): 355–68. https://doi.org/10.1007/s10677-013-9448-x.

Buss, Sarah, and Edward N. Zalta. 2015. “Personal Autonomy.” Stanford Encyclopedia of Philosophy. http://plato.stanford.edu/entries/personal-autonomy/.

Christman, John. 2004. “Relational Autonomy, Liberal Individualism, and the Social Constitution of Selves.” Philosophical Studies 117 (1): 143–164.

———. 2015. “Autonomy in Moral and Political Philosophy.” In The Stanford Encyclopedia of Philosophy, edited by Edward N. Zalta, Spring 2015. http://plato.stanford.edu/archives/spr2015/entries/autonomy-moral/.

Dainow, Brandt. 2017. “Threats to Autonomy from Emerging ICTs.” Australasian Journal of Information Systems 21 (0). https://doi.org/10.3127/ajis.v21i0.1438.

Dillahunt, Tawanna R, Christopher A Brooks, and Samarth Gulati. 2015. “Detecting and Visualizing Filter Bubbles in Google and Bing.” In Proceedings of the 33rd Annual ACM Conference Extended Abstracts on Human Factors in Computing Systems, 1851–1856. ACM.

Dworkin, Gerald. 1988. The Theory and Practice of Autonomy. Cambridge Studies in Philosophy. Cambridge; New York: Cambridge University Press.

———. 2015. “The Nature of Autonomy.” Nordic Journal of Studies in Educational Policy 1 (0). https://doi.org/10.3402/nstep.v1.28479.

Ekstrom, Laura Waddell. 1993. “A Coherence Theory of Autonomy.” Philosophy and Phenomenological Research 53 (3): 599–616.

———. 2005. “Autonomy and Personal Integration.” In Personal Autonomy: New Essays on Personal Autonomy and Its Role in Contemporary Moral Philosophy, edited by J. Stacey Taylor. Cambridge University Press.

Frankfurt, Harry G. 1988. The Importance of What We Care about: Philosophical Essays. Cambridge University Press.

Ikonen, Veikko, Minni Kanerva, Panu Kouri, Bernd Stahl, and Kutoma Wakunuma. 2010. “D.1.2. Emerging Technologies Report.” D.1.2. ETICA Project.

Kant, Immanuel. 1998. Groundwork of the Metaphysics of Morals. Translated by Mary J. Gregor. Cambridge Texts in the History of Philosophy. New York: Cambridge University Press.

Mackenzie, Catriona, and Natalie Stoljar, eds. 2000. Relational Autonomy: Feminist Perspectives on Autonomy, Agency, and the Social Self. New York: Oxford University Press.

Meyers, Diana T. 2005. “Decentralizing Autonomy: Five Faces of Selfhood.” In Autonomy and the Challenges to Liberalism, edited by John Christman and Joel Anderson, 27–55. Cambridge: Cambridge University Press. http://ebooks.cambridge.org/ref/id/CBO9780511610325A011.

Niebuhr, R. 2004. The Nature and Destiny of Man: A Christian Interpretation: Human Nature. Library of Theological Ethics. Louisville, KY: Westminster John Knox Press.

Schneewind, Jerome B. 2007. The Invention of Autonomy: A History of Modern Moral Philosophy. 7th ed. Cambridge: Cambridge University Press.

Stoycheff, E. 2016. “Under Surveillance: Examining Facebook’s Spiral of Silence Effects in the Wake of NSA Internet Monitoring.” Journalism & Mass Communication Quarterly, March. https://doi.org/10.1177/1077699016630255.

Wendling, Patrice. 2017. “Abbott Hit With $9.9 Million Class-Action Over St Jude Devices.” Medscape News. http://www.medscape.com/viewarticle/886026.